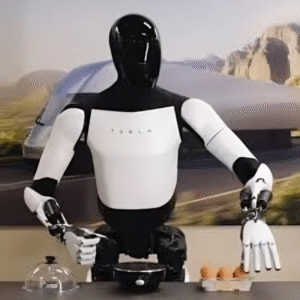

Tesla’s Optimus humanoid robot has taken a major leap in robotic intelligence with the integration of a “world model,” as revealed by Elon Musk. This cutting-edge development allows Optimus to learn directly from human demonstration videos, bridging the gap between observation and autonomous execution in real-world tasks.

Learning from Human Demonstration

Elon Musk shared that Optimus has begun using a world model for training, enabling it to understand and mimic complex tasks by watching humans perform them. In principle, this technique reduces the need for hard-coded instructions or manual teleoperation. According to Musk, Optimus learned its most recent tasks “directly from human videos,” indicating a shift toward vision-language-action integration, a key component in the evolution of autonomous systems.

What is a World Model?

A world model allows a robot to internally simulate its environment and predict outcomes before taking action. For Optimus, this means:

- Interpreting visual input (human activity)

- Predicting task-related consequences

- Executing decisions autonomously without constant recalibration
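
To make the "simulate, predict, then act" idea concrete, here is a deliberately tiny sketch. It is an illustration only and says nothing about how Tesla actually implements Optimus: the one-dimensional state, the `ACTIONS` set, and the functions `predict_next` and `plan` are all hypothetical, and the hand-written transition rule stands in for what would be a learned model in a real system.

```python
from itertools import product
from typing import Tuple

# Hypothetical action set: step left, stay, step right.
ACTIONS = (-1, 0, 1)


def predict_next(state: int, action: int) -> int:
    """Toy world model: predict the next state without acting in the real world.

    Assumption: a real system would use a learned model here,
    not a hand-written rule.
    """
    return state + action


def plan(state: int, goal: int, horizon: int = 3) -> Tuple[int, ...]:
    """Simulate every action sequence of length `horizon` internally and
    return the one whose predicted final state is closest to the goal.
    Returns an empty tuple if no sequence improves on doing nothing."""
    best_seq: Tuple[int, ...] = ()
    best_cost = abs(goal - state)
    for seq in product(ACTIONS, repeat=horizon):
        simulated = state
        for action in seq:
            simulated = predict_next(simulated, action)  # imagined rollout
        cost = abs(goal - simulated)
        if cost < best_cost:
            best_seq, best_cost = seq, cost
    return best_seq


if __name__ == "__main__":
    # Choose actions by imagining outcomes first, then "execute" them.
    print(plan(state=0, goal=2))
```

The key design point is that all candidate actions are evaluated inside the model before anything is executed; exhaustive search is used here only because the toy problem is small.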

Such systems have already been explored in generative AI and reinforcement learning, but this may be the first time one has been deployed at scale in a commercial humanoid robot.

Toward Cost-Efficient Autonomy

If Tesla continues to refine this approach, Optimus could become a game-changer in industries requiring repetitive manual labor, from manufacturing and logistics to eldercare and domestic help. Training via video could significantly reduce development time and cost, allowing companies to scale humanoid robots faster without building custom datasets or task-specific scripts.

The Race for General-Purpose Robots

By introducing a world model, Tesla positions itself at the forefront of general-purpose robotics, competing with the likes of Figure AI, Sanctuary AI, and Boston Dynamics. This move signals a shift from single-task robotics to multi-context, real-world adaptability, a holy grail in the industry.
