Vision Meets Touch for Real-time Dexterity

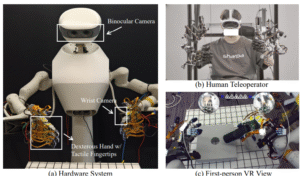

The recently released ViTacFormer ("ViTacFormer: Learning Cross‑Modal Representation for Visuo‑Tactile Dexterous Manipulation") is a neural architecture that fuses high-resolution visual data with dense tactile sensing to enable agile, adaptive control of anthropomorphic robotic hands. Validated on real-world benchmarks, it sustains continuous, long-horizon manipulation, which the work reports as a first for this kind of system.

Key Architecture & Strengths

- Cross-attention encoder merges visual and tactile streams at each timestep.

- Autoregressive tactile prediction head anticipates future contact dynamics, steering more informed action selection.

- Training leverages a curriculum-based approach that refines representations and boosts robustness across tasks.
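The first two points can be illustrated with a minimal sketch. This is not the ViTacFormer implementation: the function names (`cross_attend`, `fuse_timestep`, `rollout_tactile`), the absence of learned projections, and the fixed blending "predictor" are all assumptions made to keep the example small and dependency-free. It only shows the data flow: visual and tactile tokens attend to each other at one timestep, and a tactile forecast is rolled out by feeding each prediction back in as the next input.

```python
import math

def softmax(xs):
    # Numerically stable softmax over a list of floats.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross_attend(queries, keys_values):
    # Scaled dot-product cross-attention WITHOUT learned projections
    # (an assumption for brevity): each query token becomes an
    # attention-weighted average of the other modality's tokens.
    scale = 1.0 / math.sqrt(len(queries[0]))
    dim = len(keys_values[0])
    out = []
    for q in queries:
        weights = softmax([dot(q, k) * scale for k in keys_values])
        out.append([sum(w * kv[d] for w, kv in zip(weights, keys_values))
                    for d in range(dim)])
    return out

def fuse_timestep(visual_tokens, tactile_tokens):
    # Visual tokens attend to tactile tokens and vice versa; each token
    # keeps a residual copy of itself plus the cross-modal context.
    v_ctx = cross_attend(visual_tokens, tactile_tokens)
    t_ctx = cross_attend(tactile_tokens, visual_tokens)
    fused_v = [[a + b for a, b in zip(tok, ctx)]
               for tok, ctx in zip(visual_tokens, v_ctx)]
    fused_t = [[a + b for a, b in zip(tok, ctx)]
               for tok, ctx in zip(tactile_tokens, t_ctx)]
    return fused_v + fused_t

def mean_pool(tokens):
    n = len(tokens)
    return [sum(tok[d] for tok in tokens) / n for d in range(len(tokens[0]))]

def rollout_tactile(fused_tokens, last_tactile, horizon, mix=0.5):
    # Autoregressive rollout: every predicted tactile vector is fed back
    # as the input for the next step. The "predictor" here is a fixed
    # blend standing in for a learned head (hypothetical placeholder).
    context = mean_pool(fused_tokens)
    preds, current = [], list(last_tactile)
    for _ in range(horizon):
        current = [mix * c + (1 - mix) * x for c, x in zip(context, current)]
        preds.append(current)
    return preds

if __name__ == "__main__":
    visual = [[0.2, 0.1, 0.0, 0.5], [0.9, 0.3, 0.4, 0.1], [0.0, 0.7, 0.2, 0.2]]
    tactile = [[0.5, 0.5, 0.1, 0.0], [0.1, 0.0, 0.8, 0.3]]
    fused = fuse_timestep(visual, tactile)
    forecast = rollout_tactile(fused, tactile[-1], horizon=3)
    print(len(fused), "fused tokens;", len(forecast), "predicted tactile steps")
```

In a real model the attention would use learned query, key, and value projections, and the forecast would come from a trained network whose output also conditions the action policy; the sketch keeps only the structure described in the bullets.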

Real-World Long-Horizon Demo

ViTacFormer is reported to be the first system to autonomously execute about 2.5 minutes of continuous dexterous manipulation with an anthropomorphic robotic hand. In the demo, it completed an 11-stage sequential task (assembling a hamburger) under full AI control. Success rates exceeded 80%, while baselines were near zero and failed some stages entirely.

The demo shows that combining active vision with tactile sensing can deliver high precision and stability over extended periods on an anthropomorphic end-effector.
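To see why an 11-stage sequence is demanding, consider a back-of-the-envelope calculation. It assumes that stages succeed independently and with equal probability, which is a simplification and not something the source states; the source also does not say whether the 80% figure is per stage or end to end. Under that assumption, small per-stage error rates compound quickly. The helper names below are hypothetical.

```python
def overall_success(per_stage: float, stages: int) -> float:
    # Probability that every stage succeeds, assuming independent stages
    # with the same success probability (a simplifying assumption).
    return per_stage ** stages

def required_per_stage(overall: float, stages: int) -> float:
    # Per-stage success needed to reach a target end-to-end success rate
    # under the same independence assumption.
    return overall ** (1.0 / stages)

if __name__ == "__main__":
    # Per-stage reliability needed for 80% end-to-end over 11 stages.
    print(f"{required_per_stage(0.80, 11):.3f}")  # prints 0.980
    # End-to-end success if each of 11 stages succeeds 90% of the time.
    print(f"{overall_success(0.90, 11):.3f}")     # prints 0.314
```

Read this way, an 80% end-to-end rate over 11 stages would require each stage to succeed about 98% of the time, while a seemingly solid 90% per stage would finish the whole task less than a third of the time.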

Performance Gains & Benchmarking

- Success rates are roughly 50% higher than those of prior state-of-the-art imitation learning systems on standard manipulation tasks such as peg insertion, cap twisting, and book flicking.

- The model completed complex, multi-step routines that prior methods could not sustain, which points to stronger long-horizon capability.

Broader Impact: Toward Reliable Embodied AI

- Vision + tactile synergy enables robust performance even in visually occluded or highly dynamic environments.

- Continuous autonomous control unlocks possibilities in warehouse automation, surgical assistive robotics, and other domains requiring fine manipulation.

- The generality of the architecture suggests feasible scaling to varied robotic hardware with tactile integration.
